AI Approval Matrix Template for Internal Teams in 2026

Most AI risk starts with a simple gap: no one knows who can approve a use case. Teams adopt tools fast, then legal, IT, and operations scramble after the fact.

A practical AI approval matrix template fixes that gap. It gives every internal team one place to log the use case, name the owner, rate the risk, and route review work before a tool touches company data.

In 2026, that structure isn’t red tape. It’s how you move faster without losing control.

What an AI approval matrix needs in 2026

In many companies, the first failure isn’t the model. It’s the process. Marketing tests a writing tool, HR pilots a screening assistant, support adds an AI reply feature, and nobody uses the same rules. That creates shadow AI, duplicate reviews, and weak audit trails.

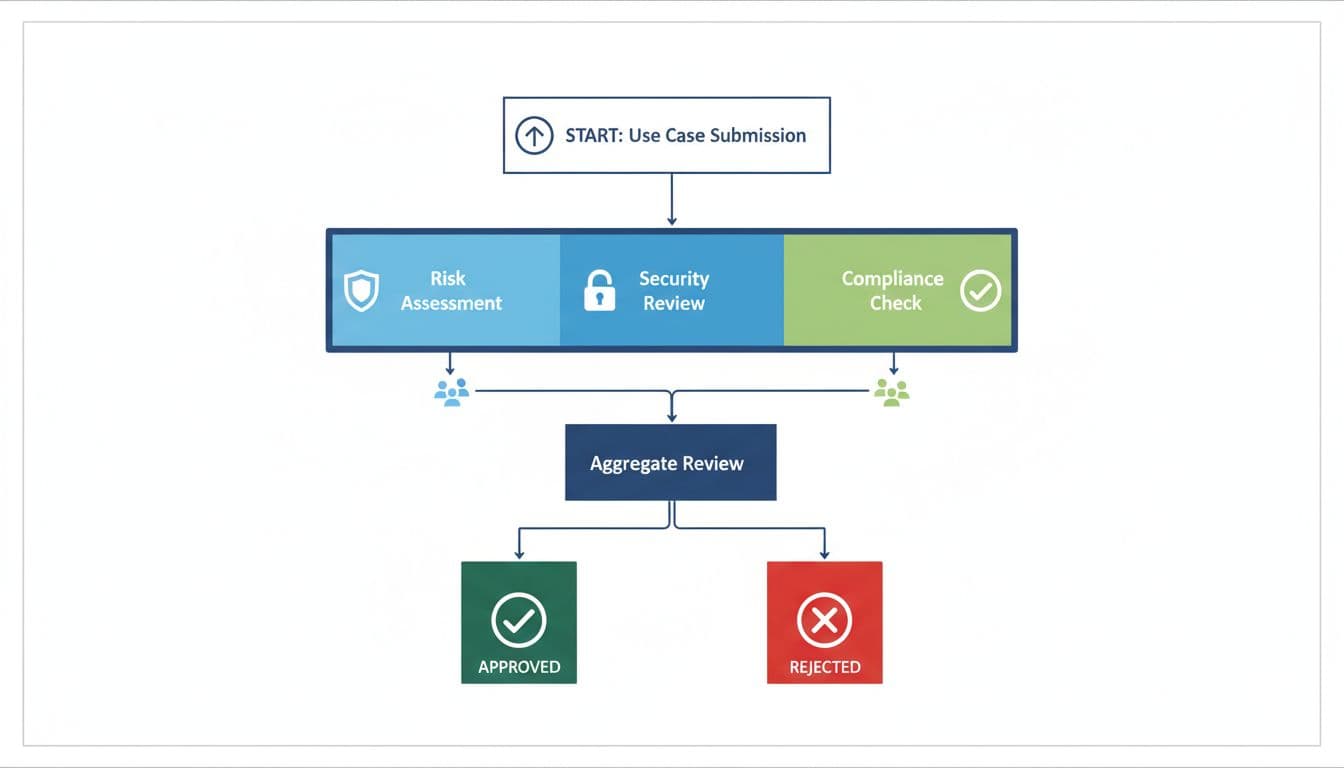

Your matrix should sit at the front door of AI use. Every request needs a named business owner, a short use-case statement, the data class, and the approved tool it will use. From there it moves through a cross-functional review path. Many teams now use operations or an AI governance group for intake, while security, legal, privacy, and the business owner review based on risk. Pertama’s AI approval workflow design guide is a solid reference for mapping those handoffs.

Risk should reflect more than vendor reputation. Rate each use case by data sensitivity, automation level, user impact, external exposure, and whether the output affects regulated decisions. Employment, customer claims, pricing, and anything involving personal data need tighter control. That matters even more in 2026, with major EU AI Act obligations scheduled to apply from August and U.S. teams facing stricter state-level scrutiny for high-impact AI.
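One way to make that rating repeatable is a small scoring function. The sketch below is illustrative only: the 0 to 2 scales, the thresholds, and the names `UseCaseFactors` and `rate_risk` are assumptions, not a standard. Tune them to your own policy.

```python
from dataclasses import dataclass


@dataclass
class UseCaseFactors:
    # All scales below are hypothetical; adjust them to your own policy.
    data_sensitivity: int     # 0 = public, 1 = internal, 2 = confidential or personal data
    automation_level: int     # 0 = human reviews every output, 1 = spot checks, 2 = no review
    user_impact: int          # 0 = minor, 1 = affects customer experience, 2 = affects rights or money
    external_exposure: bool   # True if the output leaves the company
    regulated_decision: bool  # True for employment, customer claims, pricing, and similar


def rate_risk(f: UseCaseFactors) -> str:
    """Return 'Low', 'Medium', or 'High' for a single use case."""
    # Assumption: anything touching a regulated decision is high-risk outright.
    if f.regulated_decision:
        return "High"
    score = f.data_sensitivity + f.automation_level + f.user_impact
    if f.external_exposure:
        score += 1
    if score >= 5:
        return "High"
    if score >= 3:
        return "Medium"
    return "Low"
```

Under these assumed scales, campaign copy drafted from internal material and reviewed before publishing, `UseCaseFactors(1, 0, 0, True, False)`, rates as Low, while any hiring-related use case rates as High regardless of the other factors.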

Low-risk work can move on pre-approved rails. High-risk work needs named reviewers and a clear stop signal. That balance matters because governance should filter decisions, not trap every request in the same queue.
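The routing idea can be sketched in a few lines. This is a minimal example, not a prescribed workflow: the reviewer names, the medium-risk path, and the function `route_reviews` are assumptions made for illustration.

```python
def route_reviews(risk_level: str, pre_approved: bool) -> dict:
    """Pick reviewers for a request and list who may stop it."""
    if risk_level == "Low" and pre_approved:
        # Pre-approved rails: the business owner signs off, no extra queue.
        return {"reviewers": ["business_owner"], "can_block": []}
    if risk_level == "High":
        # High risk: every named reviewer is involved and each control
        # function holds a stop signal.
        return {
            "reviewers": ["business_owner", "security", "legal", "privacy"],
            "can_block": ["security", "legal", "privacy"],
        }
    # Assumed middle path: medium risk, or low risk on a tool that is
    # not yet on the approved list, gets a security check only.
    return {"reviewers": ["business_owner", "security"], "can_block": ["security"]}
```

The point of the design is that only the high-risk branch pulls in every function, so low-risk requests never wait behind them.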

If a team can’t name the data class, approved tool, and final approver, the request isn’t ready.
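That readiness rule is easy to enforce at intake. A minimal sketch, assuming requests arrive as dictionaries and using hypothetical field names (`data_class`, `approved_tool`, `final_approver`):

```python
# Hypothetical field names; map them to the columns in your own intake form.
REQUIRED_FIELDS = ("data_class", "approved_tool", "final_approver")


def missing_fields(request: dict) -> list:
    """Return the required fields that are absent or blank in a request."""
    return [
        field
        for field in REQUIRED_FIELDS
        if not str(request.get(field) or "").strip()
    ]


def is_ready(request: dict) -> bool:
    """A request is ready for review only when nothing required is missing."""
    return not missing_fields(request)
```

Returning the list of missing fields, rather than a bare yes or no, lets the intake owner tell the requester exactly what to fill in.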

Keep the matrix simple enough to finish in a few minutes. If it turns into a long form, people will work around it.

Your ready-to-use AI approval matrix template

Use the matrix as a live register, not a one-time sign-off sheet. A shared spreadsheet works at first. Later, many teams move it into a service desk, GRC platform, or wiki with approval history.

Start with this version, then add a new row for every approved use case.

| Use case | Team owner | Data sensitivity | Risk level | Approved tools | Human review requirement | Legal/compliance review | Security review | Final approver | Review cadence |
|---|---|---|---|---|---|---|---|---|---|
| Draft campaign copy from public product info | Marketing Ops Director | Public or internal | Low | Company-approved enterprise LLM, approved image tool | Required before publish | Only for regulated claims | At tool onboarding, then annual | VP Marketing | Quarterly |
| Draft job descriptions, interview guides, and recruiter summaries | Head of Talent Acquisition | Confidential candidate and employee data | High | Approved HR copilot with training opt-out | Required for every output, no automated hiring decisions | Required | Required before launch and after major updates | CHRO | Quarterly |
| Suggest reply drafts from ticket history and knowledge base | Support Operations Manager | Customer data, may include PII | Medium | Approved support copilot with redaction and audit logs | Required before send | Required for regulated products or policy changes | Required | VP Support | Monthly for 90 days, then quarterly |

This starter matrix does two jobs. It routes approvals, and it records operating limits. Each row should describe one use case, not one tool. The same model can be low-risk in marketing and high-risk in HR because the data and business impact are different.

Review cadence shouldn’t be random. Tie it to model updates, vendor changes, data sensitivity, and business impact. If a vendor changes retention rules, admin controls, or training settings, the row should go back into review. Simple status labels also help: proposed, approved with conditions, paused, rejected, and retired.
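If the register lives in a script or a service desk automation, the status labels and re-review triggers might look like the sketch below. The trigger keys and the `needs_rereview` helper are hypothetical, and skipping rejected and retired rows is an assumption rather than a rule from this template.

```python
from enum import Enum


class Status(Enum):
    PROPOSED = "proposed"
    APPROVED_WITH_CONDITIONS = "approved with conditions"
    PAUSED = "paused"
    REJECTED = "rejected"
    RETIRED = "retired"


# Hypothetical keys for the vendor changes that should reopen a row.
REVIEW_TRIGGERS = {"retention_rules", "admin_controls", "training_settings", "model_update"}


def needs_rereview(status: Status, vendor_changes: set) -> bool:
    """Return True if a vendor change should send this row back to review."""
    # Assumption: rows that are no longer in use do not need a new review.
    if status in (Status.REJECTED, Status.RETIRED):
        return False
    return bool(REVIEW_TRIGGERS & set(vendor_changes))
```

For example, `needs_rereview(Status.APPROVED_WITH_CONDITIONS, {"retention_rules"})` returns `True`, while the same change on a retired row returns `False`.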

Pair the matrix with an AI vendor approval checklist. The matrix decides whether a team may use AI for a task. The vendor review decides whether the tool itself meets your privacy, logging, access, and retention rules.

How the matrix changes by team

The structure stays the same across departments, but the review depth changes with the data, the decision, and who might be affected.

Marketing

Marketing usually moves fastest because many tasks use public or internal brand material. Drafting campaign copy, repurposing webinars, or summarizing research often lands in low or medium risk. Human review should stay in place before anything goes live, especially in regulated sectors where claims, disclosures, or image use can create legal exposure.

HR

HR needs tighter controls from day one. Candidate data, employee records, and performance content sit close to privacy and employment law. In 2026, many teams treat hiring, promotion, and performance use cases as high-risk by default. Keep humans in the loop for every output, block automated final decisions, and send new HR use cases through legal and security before launch.

Customer support

Support teams often get value fast, but the mistakes are public. Reply drafting, ticket summarization, and knowledge retrieval work best when the tool masks personal data and logs every suggestion. Human review should stay on for outbound replies unless the use case is narrowly scoped and pre-approved. If the system can trigger refunds, policy exceptions, or service commitments, raise the risk level and add tighter review.

If you want to connect the matrix to broader policy, training, and incident rules, this AI governance template is a helpful starting point.

A strong AI approval matrix template gives teams one shared language for speed and control. Marketing can move faster, HR gets tighter safeguards, and security and legal get a clean review path.

Keep the matrix short, update it when tools or laws change, and treat every approval as a record, not a hallway conversation. When AI requests stop living in scattered email threads, governance starts working.