An AI Access Control Policy Template for Internal Teams in 2026

Employees can reach powerful AI tools in seconds. That speed helps, until someone uploads customer data to the wrong model or gives an internal agent broad system access.

A solid AI access control policy stops that from becoming normal. It sets who can use which models, what data they can touch, when approval is needed, and how every action is logged. Use the draft below as a starting point, then review it with legal, security, and compliance teams.

What your policy needs to control in 2026

AI access control is no longer just about logins. Internal teams now use chat assistants, coding tools, model APIs, retrieval systems, agents, and fine-tuning workflows. Each one creates a different risk. A marketer using a public chatbot doesn’t need the same rights as a data scientist training an internal model.

Shadow AI, meaning unapproved tools used outside IT review, is the gap many policies miss. In 2026, the best policies tie AI access to identity, data class, and task. That means your policy should connect to your IAM stack, require MFA, block shared accounts, and use default-deny rules. It should also cover non-human identities, because AI agents and service accounts can read files, call tools, and trigger actions at scale. Microsoft’s overview of runtime authorization for AI agents is a useful reference for this shift.
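The identity, data class, and task rule above can be sketched as a default-deny check. This is a minimal illustration, not a reference implementation: the role names, data classes, task names, and the `ALLOW_RULES` set are all hypothetical, and a real deployment would pull them from your IAM platform and data classification standard.

```python
from dataclasses import dataclass

# Hypothetical allow-list: only these (role, data_class, task) combinations are permitted.
ALLOW_RULES = {
    ("employee", "public", "chat"),
    ("employee", "internal", "chat"),
    ("analyst", "internal", "connect_data_source"),
    ("ai_engineer", "confidential", "fine_tune"),
}


@dataclass(frozen=True)
class AccessRequest:
    identity: str       # individual corporate identity, never a shared account
    role: str
    mfa_verified: bool
    data_class: str
    task: str


def is_allowed(req: AccessRequest) -> bool:
    """Default deny: allow only when MFA passed and an explicit rule matches."""
    if not req.mfa_verified:
        return False
    return (req.role, req.data_class, req.task) in ALLOW_RULES
```

Anything not listed is refused, so a new tool or data class stays blocked until someone adds a rule for it on purpose.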

Your policy also needs a governance lens. If your company sells into Europe or handles EU user data, 2026 matters because most of the EU AI Act’s high-risk obligations are scheduled to apply from 2 August 2026. A plain-language comparison of NIST AI RMF, the EU AI Act, and ISO/IEC 42001 can help policy owners map internal controls to wider compliance needs. That mapping matters for audits, board reporting, and vendor reviews.

If you can’t name who approved a model, what data it can access, and where its actions are logged, access isn’t under control.

Role-based access levels for employees and AI agents

RBAC is still the best foundation, but static roles alone aren’t enough. Keep role-based access as the base, then add context such as device trust, training status, data sensitivity, and approval steps for higher-risk actions. The OpenAI RBAC guide shows how permissions can stay consistent across both dashboard and API use.

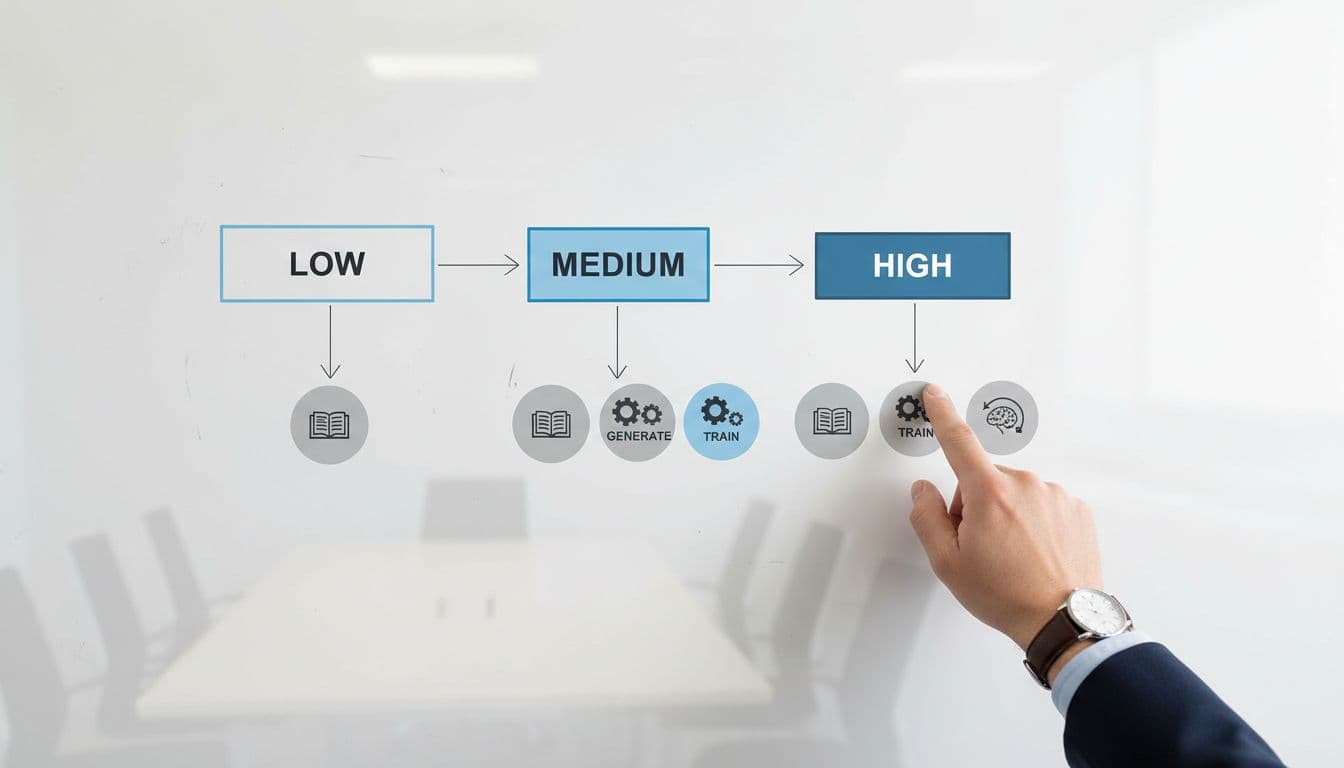

This simple model works for most internal teams:

| Access level | Typical users | Allowed actions | Extra controls |
|---|---|---|---|
| Low | Most employees | Prompt approved tools, view approved outputs, use enterprise chat | SSO, MFA, no uploads of restricted data |
| Medium | Analysts, product teams, power users | Connect approved data sources, build workflows, export low-risk outputs | Manager approval, logging, quarterly review |
| High | AI engineers, platform admins | Fine-tune models, manage connectors, change guardrails, create agents | PAM, ticketed approval, sandbox testing, full audit trail |

The main point is simple: most staff need low access, while high-risk model actions stay rare and tightly approved.
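One way to make the three tiers enforceable is to map each action to the minimum level it requires. The sketch below is illustrative only. The action names are hypothetical labels for the table's entries, and it assumes higher tiers inherit lower-tier actions, which the table does not state and your policy may not want.

```python
from enum import IntEnum


class AccessLevel(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Minimum level required per action (hypothetical action names).
REQUIRED_LEVEL = {
    "prompt_approved_tool": AccessLevel.LOW,
    "view_approved_output": AccessLevel.LOW,
    "connect_data_source": AccessLevel.MEDIUM,
    "build_workflow": AccessLevel.MEDIUM,
    "export_low_risk_output": AccessLevel.MEDIUM,
    "fine_tune_model": AccessLevel.HIGH,
    "manage_connector": AccessLevel.HIGH,
    "change_guardrails": AccessLevel.HIGH,
    "create_agent": AccessLevel.HIGH,
}


def can_perform(level: AccessLevel, action: str) -> bool:
    """Return True only if the action is known and the level is high enough."""
    required = REQUIRED_LEVEL.get(action)
    if required is None:
        return False  # unknown actions are denied by default
    return level >= required
```

The extra controls column (manager approval, PAM, ticketed approval) is not modeled here. Those checks would sit in front of this function in a real system.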

Give AI agents their own identities and narrower rights than the humans who create them. For example, an invoice agent may read one mailbox and one ERP endpoint, but it shouldn’t browse shared drives. If you’re building agent workflows, the RBAC guide for AI agents is useful because it starts with default deny and records allow, deny, and approval-required decisions.
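The invoice agent example can be sketched as a per-agent policy that starts from default deny and records every decision. The resource strings, the `AgentPolicy` class, and the idea that posting a payment needs approval are assumptions for illustration, not part of any specific product or guide.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    APPROVAL_REQUIRED = "approval_required"


@dataclass
class AgentPolicy:
    agent_id: str                                   # the agent's own identity
    allowed: set[str] = field(default_factory=set)
    needs_approval: set[str] = field(default_factory=set)
    decisions: list[tuple[str, str]] = field(default_factory=list)

    def authorize(self, resource: str) -> Decision:
        """Default deny; every decision is appended to the decision record."""
        if resource in self.needs_approval:
            decision = Decision.APPROVAL_REQUIRED
        elif resource in self.allowed:
            decision = Decision.ALLOW
        else:
            decision = Decision.DENY
        self.decisions.append((resource, decision.value))
        return decision


# Hypothetical invoice agent: one mailbox, one ERP endpoint, nothing else.
invoice_agent = AgentPolicy(
    agent_id="invoice-agent",
    allowed={"mailbox:invoices", "erp:read_invoices"},
    needs_approval={"erp:post_payment"},
)
```

With this setup, a call such as `invoice_agent.authorize("shared_drive:finance")` returns `Decision.DENY` because the shared drive was never granted, and the refusal is still recorded for review.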

A practical AI access control policy template

Use the text below as a working draft. Replace each placeholder with your own names, systems, and approval paths.

- “At [Company Name], access to AI systems is granted only for approved business use. This policy applies to employees, contractors, vendors, service accounts, and AI agents that use, administer, or integrate with [Approved AI Tools Register].”

- “All AI access must use corporate identity through [Identity Provider]. Shared accounts are prohibited. Multi-factor authentication is required for all users. Privileged AI administration must use [PAM Tool] and time-limited access.”

- “Users may only process data in AI systems according to the data classification standard at [Policy Name]. Restricted data, including [customer PII, PHI, source code, financial records, trade secrets], may only be used in tools approved for that class and with logging enabled.”

- “Data sent to third-party models must follow approved retention, residency, and training controls. [Training opt-out setting], [approved region], and [retention limit] must be applied where available.”

- “Public or personal AI tools may not be used for company work unless [Approval Authority] has approved them in writing. Security may block unsanctioned AI applications, browser extensions, and model endpoints.”

- “Model creation, fine-tuning, connector setup, and agent deployment require documented approval from [AI Owner], [Security Owner], and [Business Owner]. Each approved model or agent must have an owner, purpose, approved data sources, retention rule, and rollback plan.”

- “All AI interactions covered by this policy must create logs that record user or agent identity, tool used, data source touched, approval state, and timestamp. Logs must be retained for [X months] and reviewed [monthly or quarterly] by [Control Owner].”

- “Access reviews must occur every [30 or 90] days and after role changes, transfers, or termination. HR-triggered provisioning and deprovisioning must sync with [IAM Platform] so access is added, changed, or removed within [time period].”

- “Violations, suspected data exposure, unsafe model behavior, or unapproved AI use must be reported to [Security Contact] within [X hours].”
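The logging and access review clauses above translate into two small checks. The sketch below shows a log record carrying the five fields the template names, plus a helper that flags an overdue review. The class name, field names, JSON-lines output, and example values are assumptions. Adapt them to whatever your SIEM or log pipeline expects.

```python
import json
from dataclasses import dataclass, asdict
from datetime import date, datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class AIAuditRecord:
    identity: str         # user or agent identity
    tool: str             # tool used
    data_source: str      # data source touched
    approval_state: str   # e.g. "approved", "denied", "pending"
    timestamp: str        # ISO 8601, UTC


def make_record(identity: str, tool: str, data_source: str,
                approval_state: str,
                now: Optional[datetime] = None) -> AIAuditRecord:
    """Build a record; `now` can be injected so tests are deterministic."""
    now = now or datetime.now(timezone.utc)
    return AIAuditRecord(identity, tool, data_source, approval_state,
                         now.isoformat())


def to_json_line(record: AIAuditRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


def review_overdue(last_review: date, interval_days: int, today: date) -> bool:
    """True when more than `interval_days` have passed since the last review."""
    return today > last_review + timedelta(days=interval_days)
```

Making the timestamp and the review interval explicit inputs keeps both placeholders, [X months] and [30 or 90], visible as configuration rather than buried in code.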

Pair this policy with an approved tools inventory. A policy without a tools list becomes a paper shield. If you want a companion document for employee behavior, this practical AI acceptable use template fits well beside an access policy.

Conclusion

A useful AI access control policy template does one job well. It turns broad governance goals into clear, reviewable permissions.

When the policy connects identity, data class, model risk, and audit logs, internal teams can move faster without guessing what’s allowed. In 2026, clarity matters more than length. A short policy with named owners, tight roles, and real enforcement will protect your teams better than a long document nobody can apply.