AI Pilot Evaluation Scorecard for Internal Teams in 2026

A pilot can impress people in a demo and still fail in production. In 2026, the hard part is no longer starting AI tests. It’s deciding which ones deserve budget, review time, workflow change, and executive trust.

If operations sees time saved, legal sees risk, and IT sees integration debt, you need one shared frame. A practical AI pilot evaluation scorecard gives internal teams a clear basis for scale, pivot, or stop.

Why a structured scorecard matters now

Only about a quarter of enterprises report that 40% or more of their AI pilots have reached production. That gap persists because pilots are still too often judged on output quality alone.

Internal teams have to carry the full load. They deal with governance, privacy, human review, vendor terms, support effort, and business impact. A scorecard turns those scattered concerns into one decision record.

Good scorecards use real workflows, real evidence, and weighted tradeoffs. That approach shows up in the Legal AI Evaluation Framework and in a practical value, risk, and readiness scorecard. The point is simple: a pilot should prove it can work inside the business, not only inside a sandbox.

If a pilot can’t pass security, governance, and workflow fit in a small test, it won’t get safer at scale.

The floor is also higher in 2026. If a pilot touches personal data, regulated decisions, or external content, governance can’t wait for production. Teams should map controls to existing policies and, where relevant, to NIST AI RMF, ISO 42001, and EU AI Act requirements. That keeps the review grounded in rules your business already has to follow.

A practical scorecard your team can use

Score each dimension from 1 to 5, then weight it: multiply the score by the dimension’s weight and divide by 5, so a pilot that scores 5 everywhere totals 100. A 1 means material gaps, a 3 means acceptable for a limited pilot, and a 5 means ready for controlled rollout.

This template works well for cross-functional reviews:

| Dimension | Weight | Evidence to review |
|---|---|---|
| Business outcomes | 25% | Baseline KPI, pilot lift, cost per task, error reduction |
| Governance and compliance | 15% | Use case classification, approvals, audit trail, policy fit |
| Data privacy and security | 15% | Data flow, retention, access control, red-team findings |
| Human oversight | 10% | Reviewer checkpoints, escalation path, override rules |
| Integration readiness | 10% | API fit, identity, monitoring, workflow handoffs |
| Reliability and quality | 10% | Accuracy, hallucination rate, latency, fallback behavior |
| Vendor risk | 10% | SLA, model transparency, contract terms, exit options |
| Adoption and change fit | 5% | User uptake, training effort, shadow AI reduction |

The main point is balance. A pilot with flashy outputs and weak controls shouldn’t pass. A pilot with strong controls and no business gain shouldn’t pass either.
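The weighting can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the dictionary keys and the `weighted_total` function are hypothetical names, and dividing by 5 is an assumption that maps straight 5s to a total of 100.

```python
# Weights from the scorecard table, in percent. They sum to 100.
# The key names are illustrative, not part of the scorecard itself.
WEIGHTS = {
    "business_outcomes": 25,
    "governance_compliance": 15,
    "data_privacy_security": 15,
    "human_oversight": 10,
    "integration_readiness": 10,
    "reliability_quality": 10,
    "vendor_risk": 10,
    "adoption_change_fit": 5,
}


def weighted_total(scores: dict[str, int]) -> float:
    """Turn 1-5 dimension scores into a 0-100 weighted total.

    Assumption: each score is scaled by score / 5, so straight 5s give 100.
    """
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the scorecard dimensions")
    for name, score in scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{name}: score must be between 1 and 5")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS) / 5


# A pilot that is "acceptable for a limited pilot" on every dimension.
all_threes = {name: 3 for name in WEIGHTS}
print(weighted_total(all_threes))  # 60.0
```

Under this scaling, a pilot needs an average of 4 across the weighted dimensions to reach 80, which is why a run of 3s is not enough to scale.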

What to collect before the review meeting

Bring a small evidence pack so the meeting stays factual.

- Baseline KPI data and the target improvement

- A real sample set, often 50 to 100 completed tasks

- Security and privacy review notes, including data retention

- User feedback, override rates, and training pain points

- Vendor documents, SLAs, and export or exit terms

For integration, look past the demo. Can the tool use your identity stack, log events, plug into core systems, and fail safely? That is often where pilots stall. The AI readiness scorecard for APIs and documentation is a useful reminder that basic technical fit can block scale long before model quality does.

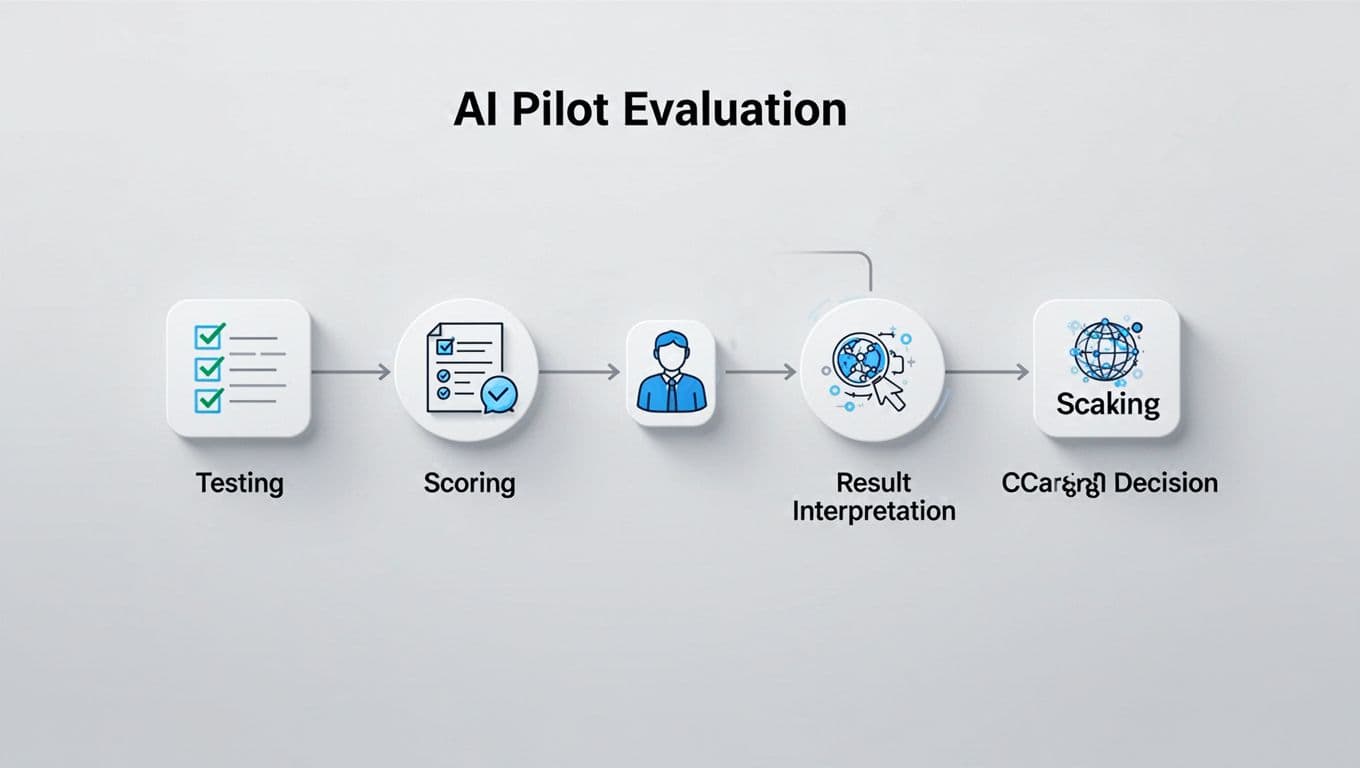

How to score and interpret results

The weighted total matters, but hard gates matter more. A pilot that totals 88 out of 100 but fails on privacy is not a scale candidate. Likewise, strong user excitement can’t offset weak human oversight in a regulated workflow.

Use a decision rule like this:

- Scale at 80 or above, provided no gated dimension scores below 3.

- Pivot at 65 to 79, or at 80 or above if any gated dimension scores below 3.

- Stop below 65, or when the cost to fix gaps is larger than the likely gain.

Treat these as gated dimensions: governance and compliance, data privacy and security, human oversight, and vendor risk. If any of them scores below 3, the pilot stays contained until the issue is fixed and re-tested.

A quick example helps. Say a customer support drafting assistant cuts average handle time by 12% and agents like it. That sounds promising. Still, the security review finds prompt injection exposure, unclear retention settings, and no formal reviewer checkpoint for high-risk replies. The pilot earns a 76 overall, but privacy and oversight both score 2. The right decision is pivot, not scale.
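The decision rule and gates above can be sketched as a small function. This is one possible reading, with assumptions stated: the names `GATED`, `failed_gates`, and `decide` are hypothetical, a total below 65 is treated as a stop even when a gate also fails, and the governance and vendor scores in the usage example are placeholders because the support assistant example does not state them.

```python
from collections.abc import Mapping

# Gated dimensions named in the text; the key names are illustrative.
GATED = (
    "governance_compliance",
    "data_privacy_security",
    "human_oversight",
    "vendor_risk",
)
GATE_MINIMUM = 3  # a gated dimension below this score fails its gate


def failed_gates(scores: Mapping[str, int]) -> list[str]:
    """List the gated dimensions that score below the minimum."""
    return [name for name in GATED if scores[name] < GATE_MINIMUM]


def decide(
    total: float,
    scores: Mapping[str, int],
    fix_cost_exceeds_gain: bool = False,
) -> str:
    """Return 'scale', 'pivot', or 'stop' for a pilot.

    Assumption: 'stop' takes precedence over a gate failure below 65.
    """
    if total < 65 or fix_cost_exceeds_gain:
        return "stop"
    if total >= 80 and not failed_gates(scores):
        return "scale"
    # Either the total sits in the pivot band or a gate failed.
    return "pivot"


# The support drafting assistant: 76 overall, privacy and oversight at 2.
# Governance and vendor scores are placeholders, not from the example.
support_assistant = {
    "governance_compliance": 4,
    "data_privacy_security": 2,
    "human_oversight": 2,
    "vendor_risk": 4,
}
print(decide(76, support_assistant))    # pivot
print(failed_gates(support_assistant))  # ['data_privacy_security', 'human_oversight']
```

Returning the failed gates alongside the decision gives the review meeting a concrete re-test list instead of a bare verdict.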

Use evidence and trend lines, not a one-day snapshot. Review logs, incident tickets, override rates, escalation rates, and cost per successful task. For GenAI pilots, test guardrails directly. NIST’s ARIA pilot report is a useful reference because it evaluates whether systems follow defined guardrails, not whether outputs merely look good.
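To make the trend-line idea concrete, here is a rough sketch that turns weekly log roll-ups into the rates mentioned above. Everything in it is an assumption for illustration: the `WeekLog` fields, the function names, and the sample numbers are hypothetical, and real logs will need their own definitions of a "successful" task.

```python
from dataclasses import dataclass


@dataclass
class WeekLog:
    """Hypothetical weekly roll-up of pilot logs; field names are illustrative."""
    tasks: int        # tasks the pilot attempted
    successful: int   # tasks accepted as correct
    overridden: int   # outputs a reviewer replaced or rewrote
    escalated: int    # tasks routed to a human escalation path
    cost: float       # total spend for the week, in any consistent currency


def weekly_metrics(week: WeekLog) -> dict[str, float]:
    """Compute override rate, escalation rate, and cost per successful task."""
    if week.tasks <= 0 or week.successful <= 0:
        raise ValueError("need at least one task and one successful task")
    return {
        "override_rate": week.overridden / week.tasks,
        "escalation_rate": week.escalated / week.tasks,
        "cost_per_successful_task": week.cost / week.successful,
    }


def trend(values: list[float]) -> float:
    """Change from the first to the last observation; negative means falling."""
    if len(values) < 2:
        raise ValueError("a trend needs at least two observations")
    return values[-1] - values[0]


# Made-up sample data for two pilot weeks.
weeks = [
    WeekLog(tasks=100, successful=80, overridden=20, escalated=10, cost=200.0),
    WeekLog(tasks=100, successful=90, overridden=10, escalated=5, cost=180.0),
]
metrics = [weekly_metrics(week) for week in weeks]
cost_trend = trend([m["cost_per_successful_task"] for m in metrics])
print(cost_trend)  # -0.5
```

A first-to-last difference is the simplest possible trend measure. With more weeks of data, a team might prefer a moving average or a fitted slope so one unusual week does not dominate the picture.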

One more point matters in 2026. Browser-based and agent-style tools raise new control issues. Teams should test sensitive uploads, prompt injection defenses, copy-paste policy controls, and action approval steps before any wider rollout.

Conclusion

The best scorecards don’t slow progress. They stop weak pilots from soaking up trust, time, and budget.

When internal teams score outcomes, risk, oversight, and integration together, scaling becomes a disciplined decision. In 2026, the strongest signal is not a clever demo. It’s evidence that the pilot works, fits policy, and can hold up in day-to-day operations.