A Practical AI Data Retention Policy Template for Internal Teams in 2026

Most companies rolled out AI faster than they wrote rules for it. That gap gets risky fast when prompts, outputs, uploads, and vendor logs start piling up across teams.

A workable AI data retention policy template gives people clear limits. It tells staff what data they can use, how long records stay, when deletion starts, and who signs off when an exception appears.

Why 2026 needs tighter AI retention rules

AI data is messy because it rarely sits in one place. A single task can create prompt logs, output files, chat history, model feedback, admin logs, and copies inside a vendor platform. As AI Journal’s look at data lifecycle management in the age of AI notes, retention now sits at the center of privacy, cost, and risk.

The pressure is also coming from regulation. In 2026, teams running high-risk AI systems in scope of the EU AI Act need automatic logging over the system's lifetime, and some logs must be kept for at least six months. A plain-English summary of automatic log retention under Article 19 is a useful reference point, even if your use case falls outside the EU.

For internal teams, the policy goal is simple. Classify each AI data type, name its purpose, set a retention period, define a deletion trigger, and assign an owner. If your organization handles regulated data, public-sector work, or sensitive employee records, have legal counsel review the final policy language.

Keep data only while you can explain why it exists.

Customizable AI data retention policy template

Start with a short policy that people can use, not a giant manual nobody opens. The template below is built for internal operations, IT, security, HR, legal, and department leads.

Core policy language you can adapt

- This policy applies to [employees, contractors, departments] using [approved AI tools], internal models, embedded AI features, and third-party AI vendors connected to company data.

- Covered data includes prompts, uploaded files, outputs, conversation history, embeddings, feedback labels, training or fine-tuning data, usage logs, admin logs, and vendor support records.

- Staff may use only [permitted data classes] in approved tools. They may not enter [restricted data classes] into unapproved or personal AI tools.

- Unapproved AI use for company work is prohibited unless [approval process] is completed. Security may monitor, block, or investigate policy breaches.

- Each AI data class must have a documented business purpose, named owner, storage location, retention period, deletion method, and legal-hold rule.

- Company data may not be used to train vendor or public models unless [approving authority] gives written approval and the contract permits that use.

- Prompt and output logs may be retained for [30, 60, or 90 days] for security, debugging, abuse review, and audit support. Teams should mask personal or sensitive data where feasible.

- If litigation, HR review, security review, or regulatory inquiry begins, routine deletion pauses for the affected records until [release authority] lifts the hold.

- Exceptions must be documented in [system of record] and reviewed by [AI governance group] every [month or quarter].

Replace each bracketed field before approval, and give every data class one named owner.
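One way to enforce that last step is a quick pre-approval check that scans the draft for bracketed fields nobody has filled in. The sketch below is only an illustration: the function name `unfilled_fields` and the sample draft sentence are hypothetical, and it assumes the policy text uses square brackets only for template fields.

```python
import re

# Matches bracketed template fields such as "[approval process]".
PLACEHOLDER = re.compile(r"\[([^\[\]]+)\]")


def unfilled_fields(policy_text: str) -> list[str]:
    """Return distinct bracketed fields still present, in order of first appearance."""
    seen: list[str] = []
    for match in PLACEHOLDER.finditer(policy_text):
        field = match.group(1).strip()
        if field not in seen:
            seen.append(field)
    return seen


# Hypothetical draft: one field has been replaced, two have not.
draft = (
    "Exceptions must be documented in [system of record] and reviewed by "
    "the AI Governance Committee every [month or quarter]."
)
print(unfilled_fields(draft))
```

An empty list means every field was replaced. Run the check on the policy language itself, since any other square brackets in a document, such as markdown link or image syntax, would also be reported.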

A strong template also needs system metadata. For each AI tool, keep a one-page record with the tool name, owner, vendor, data types used, approved use case, retention schedule, deletion method, and contract limits on vendor training. That record is often what auditors ask for first.
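If the one-page record lives in a structured form rather than a document, incomplete entries are easy to spot. The following is a minimal sketch, assuming one record per tool. The class name `AIToolRecord`, its field names, and the example values are all hypothetical and simply mirror the items listed above.

```python
from dataclasses import dataclass, fields


@dataclass
class AIToolRecord:
    """One-page record for a single AI tool (field names are illustrative)."""
    tool_name: str
    owner: str
    vendor: str
    data_types: list[str]
    approved_use_case: str
    retention_schedule: str
    deletion_method: str
    vendor_training_limits: str


def missing_fields(record: AIToolRecord) -> list[str]:
    """Return the names of fields that are empty or whitespace-only."""
    missing = []
    for f in fields(record):
        value = getattr(record, f.name)
        if isinstance(value, str):
            if not value.strip():
                missing.append(f.name)
        elif not value:  # empty list
            missing.append(f.name)
    return missing


# Hypothetical entry with two gaps: no owner and no contract limits recorded.
record = AIToolRecord(
    tool_name="Example Assistant",
    owner="",
    vendor="Example Vendor",
    data_types=["prompts", "outputs"],
    approved_use_case="Drafting internal summaries",
    retention_schedule="Prompts and outputs: 30 days",
    deletion_method="Scheduled purge job",
    vendor_training_limits="",
)
print(missing_fields(record))
```

A check like this could run whenever the inventory changes, so a tool without a named owner or documented contract limits is flagged before an audit rather than during one.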

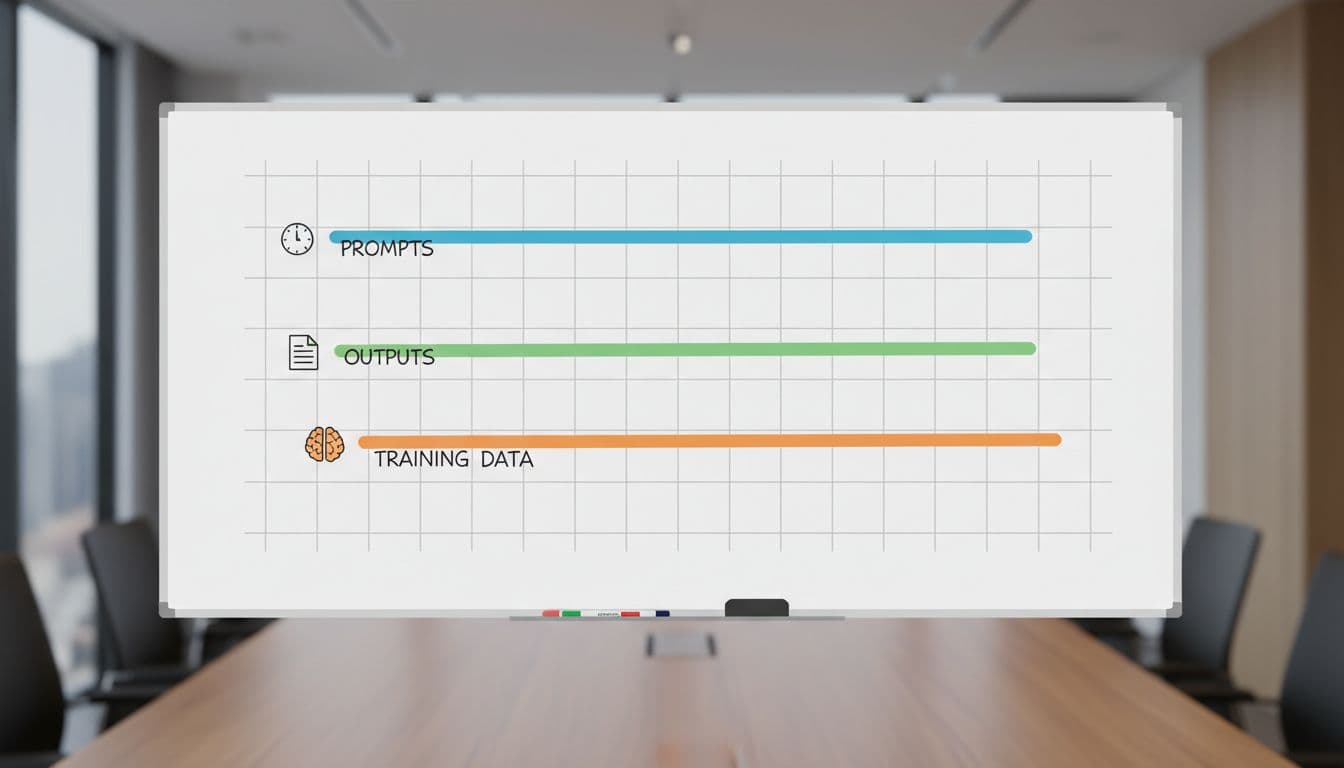

A simple retention schedule teams can start with

Use the table below as a starting point, then align it with your records schedule, contracts, and legal review.

| Data category | Typical purpose | Suggested retention | Notes |
|---|---|---|---|
| Prompts and uploads | Security review, debugging | 30 to 90 days | Shorter if sensitive data appears |
| Outputs stored in business systems | Work record or deliverable | Match the source record schedule | Retain only if the output matters |
| Copilot chat history | User continuity, support | 30 days by default | Allow deletion for sensitive work |
| Fine-tuning or training data | Model improvement, validation | Until model retirement plus review | Track consent, source, and lineage |
| Vendor admin and access logs | Incident response, audit | 180 to 365 days | Keep exportable logs |
| High-risk AI logs, if applicable | Compliance evidence | At least 6 months | Confirm with counsel |
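The day-based rows of the schedule can be turned into a simple deletion check. The sketch below is illustrative, not a recommended configuration. The category keys, the `Record` class, and `deletion_due` are hypothetical names, the day counts take the upper end of each suggested range, and rows without a fixed day count (outputs, training data, high-risk logs) are left out. It also applies the hold rule from the core policy language: a record under hold is never due for routine deletion.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Illustrative retention periods in days, taken from the upper end of the
# suggested ranges. Replace these with your approved schedule.
RETENTION_DAYS = {
    "prompts_and_uploads": 90,
    "copilot_chat_history": 30,
    "vendor_admin_logs": 365,
}


@dataclass
class Record:
    category: str
    created: date
    legal_hold: bool = False


def deletion_due(record: Record, today: date) -> bool:
    """True when the retention period has elapsed and no hold applies."""
    if record.legal_hold:
        return False  # routine deletion pauses until the hold is lifted
    expires = record.created + timedelta(days=RETENTION_DAYS[record.category])
    return today >= expires


today = date(2026, 6, 1)
old_chat = Record("copilot_chat_history", date(2026, 4, 1))
held_chat = Record("copilot_chat_history", date(2026, 4, 1), legal_hold=True)
recent_prompt = Record("prompts_and_uploads", date(2026, 4, 1))

for r in (old_chat, held_chat, recent_prompt):
    print(r.category, r.legal_hold, deletion_due(r, today))
```

Keeping the hold check ahead of the date arithmetic means a hold always wins, whatever the schedule says. An unknown category raises a `KeyError` instead of silently picking a default, which fits the rule that every data class needs a documented retention period.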

The main point is consistency. If staff use an unapproved tool, do not create a shadow retention schedule around it. Treat that activity as a policy breach, keep only the minimum incident record needed, then delete under HR and security rules.

Governance, vendors, and audit readiness

A policy fails when nobody owns it. Cross-functional governance works better because AI data touches records, privacy, security, employment, and procurement at the same time.

- Operations should own business purpose and record classification.

- IT should own system inventory, storage locations, and deletion jobs.

- Security should own access logs, monitoring, and incident holds.

- Legal, HR, and compliance should review privacy duties, employment issues, and exception handling.

Vendor terms matter just as much as internal rules. Contracts should state whether the vendor can train on your data, how long support logs stay, when deletion happens after termination, and whether you can get audit logs on demand. In practice, your evidence should look more like a tamper-evident AI agent audit trail than a folder of screenshots.
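To make "tamper-evident" concrete, one common approach is a hash chain, where each log entry stores a hash of its own content combined with the previous entry's hash. The sketch below uses that technique as an assumption about what such a trail could look like. It does not describe any particular vendor's format, and the event fields and job names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """SHA-256 over the previous hash plus a canonical JSON form of the event."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], event: dict) -> None:
    """Append an event, linking it to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append(
        {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    )


def verify(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, {"job": "purge_prompt_logs", "deleted": 1200, "ran_at": "2026-03-01T02:00:00Z"})
append_entry(log, {"job": "purge_chat_history", "deleted": 340, "ran_at": "2026-03-01T02:05:00Z"})
print(verify(log))  # True: the chain is intact

log[0]["event"]["deleted"] = 0  # simulate someone editing an old entry
print(verify(log))  # False: the stored hash no longer matches
```

A chain like this only detects edits if the latest hash is also recorded somewhere the log's editor cannot rewrite, such as a separate system or a periodic export. Otherwise someone with write access could rebuild the whole chain.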

The best audit file is boring in a good way. It has the policy, system inventory, retention schedule, exception log, vendor terms, and proof that deletion jobs ran when they should. That is what turns an AI data retention policy template into an operating control.

AI spread inside companies because it was useful. The teams that stay out of trouble in 2026 are the ones that pair usefulness with clear retention rules.

A good AI data retention policy template is short, owned, and tied to real deletion steps. If you can show what you keep, why you keep it, and when it goes away, the policy is doing its job.