EU AI Act Checklist for Internal Teams Before August 2026

August 2026 sounds close because it is. If your company uses AI in hiring, fraud checks, support workflows, or risk scoring, the hard part isn’t reading the law. It’s getting legal, product, security, and operations to work from the same facts.

A useful EU AI Act checklist starts with ownership and evidence, not policy slogans. By April 2026, some duties already apply, while the biggest high-risk obligations land on August 2, 2026. Because the rollout is phased, internal teams should verify dates and scope against the AI Act Service Desk timeline and the European Commission’s AI framework page.

Start with scope, roles, and the dates that matter

Don’t treat the Act like one deadline. It works more like a train schedule. Some stops passed already, and others arrive later.

Prohibited practices and AI literacy duties have applied since February 2, 2025. Rules for new general-purpose AI (GPAI) models started on August 2, 2025, along with the Act’s general penalty provisions. The main rules for many high-risk AI systems and the Article 50 transparency obligations apply from August 2, 2026, when fines for GPAI providers also become enforceable. Some product-linked systems, and GPAI models already on the market before August 2025, have until August 2027. The EU AI Compass timeline is a helpful cross-check, but official EU sources should stay at the top of your source list.
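If it helps to keep the train schedule somewhere other than a slide, here is a minimal Python sketch that splits the milestones into "already applies" and "still ahead" for a given date. The dates mirror the phases described above, and the labels and the `split_milestones` helper are illustrative names, not anything official. Treat the list as an assumption to verify against the official EU timeline before relying on it.

```python
from datetime import date

# Milestones as summarized in this article (assumption: verify each date
# against official EU sources before using this for planning).
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices and AI literacy duties"),
    (date(2025, 8, 2), "Rules for new GPAI models"),
    (date(2026, 8, 2), "Main high-risk rules and Article 50 transparency"),
    (date(2027, 8, 2), "Some product-linked systems and older GPAI models"),
]


def split_milestones(today):
    """Return (labels already in application, [(date, label)] still ahead)."""
    applied = [label for when, label in MILESTONES if when <= today]
    upcoming = [(when, label) for when, label in MILESTONES if when > today]
    return applied, upcoming


if __name__ == "__main__":
    applied, upcoming = split_milestones(date.today())
    for label in applied:
        print("In application:", label)
    for when, label in upcoming:
        days_left = (when - date.today()).days
        print(f"{days_left} days until {when.isoformat()}: {label}")
```

Running it on any working day gives each team the same answer to "what already applies to us?", which is the point of treating the Act as a schedule rather than one deadline.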

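In plain English, a provider develops an AI system, or has one developed, and places it on the market or puts it into service under its own name. A deployer uses an AI system under its own authority in the course of business. Many companies are both. That matters because the controls and paperwork differ by role.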

The biggest 2026 mistake is simple: teams know they use AI, but they can’t say which systems are in scope, who owns them, or what evidence exists.

Also, high-risk systems already on the market before August 2, 2026 don’t automatically trigger full duties on day one. If such a system gets a significant design change after that date, the full obligations can apply. So your first move is a living AI inventory, not a memo.

The working EU AI Act checklist for internal teams

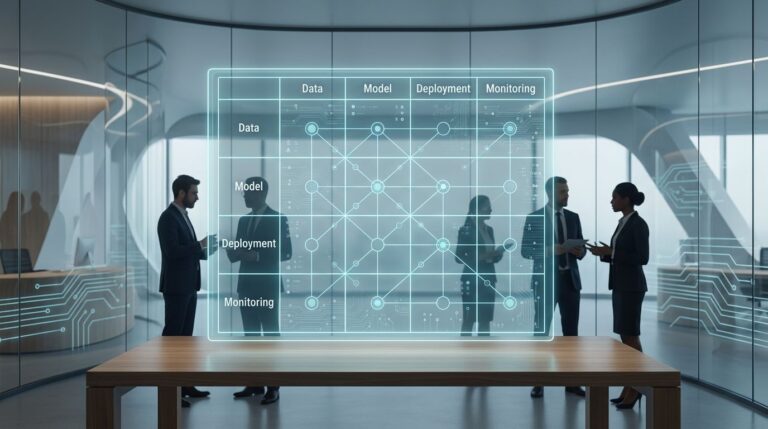

Start with one shared register across legal, procurement, product, security, and operations.

This is the core checklist most teams need now:

| Checklist item | Likely owner | What “done” looks like |
|---|---|---|
| Inventory every AI use case, model, vendor, and business process | AI governance lead with IT | One register with purpose, users, data, vendor, and system version |
| Map your legal role for each system | Legal counsel with product owner | Each use case tagged as provider, deployer, importer, or distributor |
| Screen for banned or restricted practices | Legal and compliance | Written decision on whether the use case is prohibited, high-risk, limited-risk, or minimal-risk |
| Review Annex III and product scope | Legal with risk manager | Clear rationale for why a system is or isn’t high-risk |
| Check vendor contracts and upstream evidence | Procurement with security | Contracts cover audit rights, incident notice, logs, support, and technical documents |
| Define human oversight and fallback steps | Business owner with operations | Named reviewers, escalation paths, and manual override points |
| Test data quality, logging, accuracy, robustness, and security | Data science and security | Test results, control owners, and remediation tickets |
| Set incident and post-market monitoring routines | Compliance with support teams | A process for issue intake, investigation, and reporting after launch |

If you need a practical benchmark, this high-risk systems checklist is a useful outside reference. Still, your internal register should stay tied to your own systems and evidence.
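For teams that keep the register in code or export it from a spreadsheet, one entry could be sketched roughly like this. The field names follow the "done" column in the table, but `RegisterEntry` and `gaps` are hypothetical names, and the allowed role and risk values are simply the ones listed in this article, not a legal classification tool.

```python
from dataclasses import dataclass, fields

# Allowed tags taken from the checklist table above.
ROLES = {"provider", "deployer", "importer", "distributor"}
RISK_TIERS = {"prohibited", "high-risk", "limited-risk", "minimal-risk"}


@dataclass
class RegisterEntry:
    """One row in a shared AI register (illustrative schema, not a standard)."""
    name: str
    purpose: str = ""
    users: str = ""
    data: str = ""
    vendor: str = ""
    system_version: str = ""
    owner: str = ""
    role: str = ""
    risk_tier: str = ""
    rationale: str = ""


def gaps(entry):
    """List empty fields plus any role or risk tag outside the allowed sets."""
    problems = [f.name for f in fields(entry) if not getattr(entry, f.name).strip()]
    if entry.role and entry.role not in ROLES:
        problems.append("role (unknown value)")
    if entry.risk_tier and entry.risk_tier not in RISK_TIERS:
        problems.append("risk_tier (unknown value)")
    return problems


if __name__ == "__main__":
    entry = RegisterEntry(
        name="CV ranking",
        purpose="Rank applicants",
        owner="HR lead",
        role="deployer",
    )
    print(gaps(entry))
```

A gap list per entry is a blunt tool, but it turns "we have a register" into a count of open fields that someone has to close.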

Build the evidence pack before enforcement

A checklist without proof is like a fire drill with no exits marked. Regulators, auditors, and customers won’t grade intent. They’ll look for records.

For internal teams, the evidence pack usually includes system descriptions, data sources, test results, known limits, approval records, user instructions, vendor due diligence, and training records. For high-risk use cases, keep a risk-management file, logging setup, human oversight design, and a post-market monitoring plan. That last term simply means how you’ll watch the system after release, track issues, and fix them.

Examples help. If HR uses AI to rank applicants, legal and HR should save the use case assessment, oversight steps, and bias testing record. If operations uses a support bot, the owner should keep prompts, guardrails, escalation rules, and transparency wording. If finance uses a fraud model, model risk and security teams should document monitoring thresholds and incident triggers.
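The evidence pack lends itself to a simple completeness check. The sketch below assumes evidence items are tracked as short string keys. The key names and the `missing_evidence` helper are made up for illustration, and the two sets just restate the lists in the paragraph above rather than any official template.

```python
# Evidence every internal team would usually keep (per the list above).
BASE_EVIDENCE = {
    "system_description",
    "data_sources",
    "test_results",
    "known_limits",
    "approval_records",
    "user_instructions",
    "vendor_due_diligence",
    "training_records",
}

# Extra items to keep for high-risk use cases.
HIGH_RISK_EVIDENCE = {
    "risk_management_file",
    "logging_setup",
    "human_oversight_design",
    "post_market_monitoring_plan",
}


def missing_evidence(collected, high_risk=False):
    """Return the sorted evidence keys that are required but not yet collected."""
    required = set(BASE_EVIDENCE)
    if high_risk:
        required |= HIGH_RISK_EVIDENCE
    return sorted(required - set(collected))


if __name__ == "__main__":
    hr_ranking = {"system_description", "test_results", "human_oversight_design"}
    for item in missing_evidence(hr_ranking, high_risk=True):
        print("Missing:", item)
```

Whether a use case counts as high-risk is a legal call, so the `high_risk` flag should come from the written decision in the register, not from the person running the script.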

AI literacy also can’t wait. Since February 2025, providers and deployers have had to make sure the staff who work with AI have enough training to use it responsibly. That doesn’t mean everyone needs a law seminar. It means recruiters, analysts, engineers, and managers should know what the system does, where it can fail, and when to escalate. This 2026 action plan gives a solid example of how cross-functional ownership can be assigned.

What to do in the next 30 days

Most programs stall because they start too wide. Keep the first month narrow and evidence-based.

- Build a list of your top 10 AI use cases by business impact and risk, then assign one owner to each.

- Run a quick triage for prohibited, high-risk, GPAI-related, and low-risk use cases, then flag gaps that need legal review.

- Ask every vendor for technical documents, logging details, incident terms, and model change notices.

- Pick one workflow, such as hiring or customer support, and run the full checklist end to end.

- Schedule short AI literacy sessions for the people who approve, operate, or monitor those systems.

If your team can’t answer who owns a system, what data it uses, and how a person can step in, you’re not ready yet.
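The first and last points can be roughed out in a few lines. This sketch assumes each use case gets a 1 to 5 score for business impact and for risk, which is a hypothetical scale rather than anything the Act prescribes. `UseCase`, `top_use_cases`, and `is_ready` are illustrative names.

```python
from typing import NamedTuple


class UseCase(NamedTuple):
    name: str
    impact: int              # hypothetical 1-5 business impact score
    risk: int                # hypothetical 1-5 risk score
    owner: str = ""          # who owns the system
    data_used: str = ""      # what data it uses
    human_step_in: str = ""  # how a person can step in


def top_use_cases(cases, limit=10):
    """Rank use cases by impact times risk, highest first, and keep the top few."""
    return sorted(cases, key=lambda c: c.impact * c.risk, reverse=True)[:limit]


def is_ready(case):
    """Ready only if owner, data, and human intervention path are all answered."""
    answers = (case.owner, case.data_used, case.human_step_in)
    return all(answer.strip() for answer in answers)


if __name__ == "__main__":
    cases = [
        UseCase("Support bot", impact=3, risk=2, owner="Ops lead"),
        UseCase("CV ranking", 4, 5, "HR lead", "Applicant CVs", "Recruiter review"),
        UseCase("Fraud model", impact=5, risk=3, owner="Model risk"),
    ]
    for case in top_use_cases(cases):
        status = "ready" if is_ready(case) else "not ready"
        print(f"{case.name}: {status}")
```

Multiplying two scores is a crude ranking, and any scheme your risk team already uses would do just as well. The readiness check is the part worth keeping strict.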

Keep the checklist live after August 2026

August 2026 isn’t a paperwork date. It’s a proof date.

The strongest EU AI Act checklist is the one your teams can maintain, defend, and update as the phased rules keep rolling into 2027.