AI Vendor Risk Assessment Template for Procurement Teams in 2026

AI buying got harder fast. One AI tool can touch customer data, call outside models, and create outputs your team must defend.

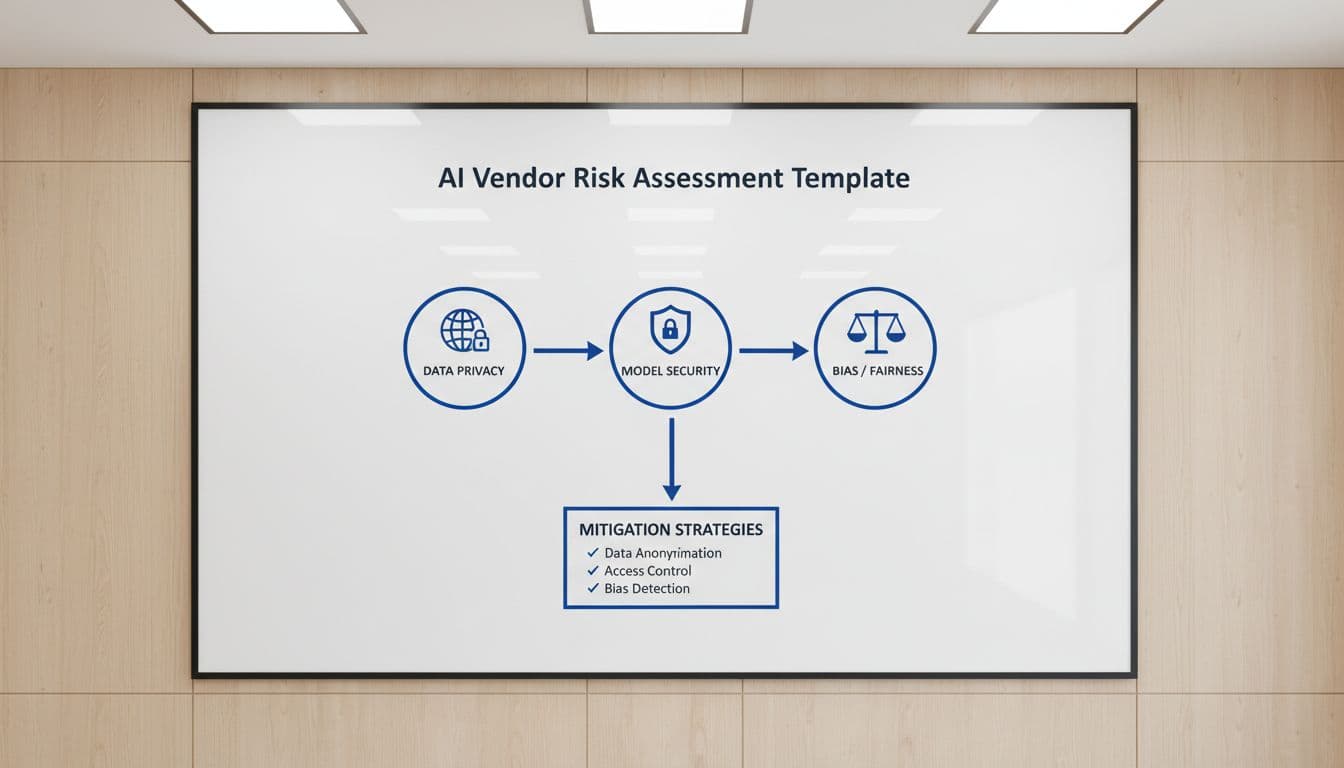

That means a normal SaaS questionnaire isn’t enough. In 2026, AI vendor risk assessment work has to test privacy, model behavior, contract terms, and change control, not only uptime and SOC reports. Start with a usable template, then route findings to the right reviewers.

Why procurement teams changed their AI review process in 2026

AI vendors now sit inside business decisions, not beside them. A writing assistant may seem harmless, yet the same vendor might retain prompts, switch model providers, or push updates that change output quality overnight.

Procurement pressure is also rising. Recent 2026 reporting shows 56% of companies faced a vendor breach in the past 6 to 12 months, while AI supply chain attacks keep growing. As a result, teams want proof of model security, bias testing, and incident response before purchase, not after rollout.

Rules are pushing the same way. Buyers increasingly map vendors to the NIST AI Risk Management Framework and check European expectations against the EDPS AI risk management guidance. If you buy for public sector or regulated use cases, those references shape contracts, audit rights, and approval paths.

If a vendor can’t show evidence, treat the control as missing.

This is also why modern due diligence asks about model providers, subprocessors, and training rights. Good background reading from ThirdProof’s AI vendor assessment guide shows how far AI review has moved beyond ordinary SaaS checks.

A copyable AI vendor risk assessment template

Use this as an intake form, a diligence worksheet, or a contract review aid. Score each row on a 1 to 5 scale, where 1 means no acceptable control, 3 means partial control, and 5 means documented control with current evidence.

Here is a practical template you can copy into a spreadsheet or intake tool.

| Risk area | Questions to ask | Evidence to request | Risk level | Owner | Scoring guidance |
|---|---|---|---|---|---|
| Data privacy | What data enters the tool, is it used for training, and how is it deleted? | DPA, data-flow map, retention schedule | H/M/L | Privacy, Legal | 5 if no training on customer data and deletion is verified |
| Model security | How are models, APIs, prompts, and admin access protected? | SOC 2 Type II, ISO 27001, pen test, red-team summary | H/M/L | Security | 5 if layered controls and recent testing are shown |
| Third-party and subprocessor risk | Which foundation models, clouds, and subprocessors are used, and can they change? | Subprocessor list, dependency inventory, notice clause | H/M/L | Procurement, Security | 5 if inventory is complete and changes require notice |
| Compliance | Which laws and standards does the vendor map to? | Control matrix, ISO 42001 or similar, DPIA or AIA summary | H/M/L | Legal, Compliance | 5 if obligations are mapped by use case and region |
| IP ownership | Who owns inputs, outputs, fine-tunes, and feedback? | Contract terms, IP indemnity, training-use restrictions | H/M/L | Legal | 5 if customer rights are clear and indemnity is strong |
| AI governance | Who approves AI use, monitors risk, and signs off on exceptions? | AI policy, governance charter, model cards | H/M/L | Compliance | 5 if governance is formal and active |
| Explainability | Can users understand why the system produced an output? | Explainability docs, user guidance, model limitations | H/M/L | Compliance, Business | 5 if limits and reasoning can be explained in practice |
| Hallucination risk | How does the vendor test false outputs and unsupported claims? | Evaluation results, benchmark reports, grounding design | H/M/L | Business, Security | 5 if error rates are measured and controlled |
| Human oversight | When must a person review, approve, or override output? | Workflow diagram, role controls, kill-switch process | H/M/L | Operations | 5 if human review is built into high-impact steps |
| Incident response | How are AI incidents, data leaks, and harmful outputs handled? | IR plan, notification SLA, incident history | H/M/L | Security, Legal | 5 if AI-specific response and fast notice exist |
| Model updates and change management | How are model changes tested, logged, and rolled back? | Release notes, change log, regression tests | H/M/L | Security, Operations | 5 if updates are versioned and revalidated |
| Data residency | Where is data stored, processed, and supported? | Region map, SCCs, hosting options | H/M/L | Privacy, Legal | 5 if residency choices and transfer controls are clear |
| Bias and fairness | How does the vendor test for harmful bias across groups? | Bias test reports, monitoring metrics, mitigation plan | H/M/L | Compliance, Business | 5 if tests are recurring and tied to remediation |
| Business continuity | Can the service recover fast, and can you exit cleanly? | BCP/DR plan, RTO/RPO, export process | H/M/L | Procurement, IT | 5 if recovery targets and exit rights are documented |

The table works best when you separate inherent risk from control strength. A low-risk writing aid can pass with lighter evidence. An AI agent handling customer data or making employment, financial, or health-related recommendations needs a deeper review.
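If your intake tool accepts scripts, one row of the template could be recorded roughly like this. It is a minimal sketch, not a standard schema: the `TemplateRow` class, its field names, and the sample rows are hypothetical. It applies the 1 to 5 scale and the rule that a control without evidence counts as missing, which this sketch assumes means a score of 1.

```python
from dataclasses import dataclass, field


@dataclass
class TemplateRow:
    """One row of the assessment template (hypothetical structure)."""
    risk_area: str
    owner: str
    claimed_score: int                      # 1-5, as assessed from vendor answers
    evidence: list[str] = field(default_factory=list)  # documents actually received

    def effective_score(self) -> int:
        """Return the score to record after applying the evidence rule."""
        if not 1 <= self.claimed_score <= 5:
            raise ValueError("score must be between 1 and 5")
        # Assumption: no evidence on file means the control is treated
        # as missing, which maps to the lowest score on the scale.
        return self.claimed_score if self.evidence else 1


rows = [
    TemplateRow("Data privacy", "Privacy, Legal", 5, ["DPA", "retention schedule"]),
    TemplateRow("Model security", "Security", 4),  # strong answer, nothing attached
]

scores = {row.risk_area: row.effective_score() for row in rows}
print(scores)
```

Keeping the claimed score and the evidence list separate makes it easy to see where a polished answer was downgraded for lack of proof.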

How to run cross-functional review without slowing the deal

One owner can’t carry this alone. Procurement should run intake, collect evidence, and track red lines. Security should validate technical controls. Legal should review IP, liability, data rights, and subprocessor terms. Compliance should check use-case restrictions, fairness controls, and regional obligations. The business owner should test output quality and human review steps.

Stage the work by use case. First, screen for data sensitivity, autonomy, and impact on people. Then send only higher-risk vendors into deep review. This avoids dragging every pilot through a full audit.
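The screening step can be sketched as a small routing function. The `UseCaseScreen` fields and the rule that any single flag sends a vendor to deep review are assumptions for illustration; your own threshold may be stricter or looser.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCaseScreen:
    """Answers to the three screening questions (hypothetical field names)."""
    handles_sensitive_data: bool   # e.g. customer data enters the tool
    acts_autonomously: bool        # takes actions without a person approving
    affects_people: bool           # e.g. employment, financial, or health-related


def review_track(screen: UseCaseScreen) -> str:
    """Route a use case to light or deep review.

    Assumption: any one flag is enough to require deep review.
    """
    flagged = (
        screen.handles_sensitive_data
        or screen.acts_autonomously
        or screen.affects_people
    )
    return "deep review" if flagged else "light review"


writing_aid = UseCaseScreen(False, False, False)
support_agent = UseCaseScreen(True, True, False)

print(review_track(writing_aid))    # light review
print(review_track(support_agent))  # deep review
```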

Many teams also treat AI suppliers as a living chain, not a single vendor. That’s the core point of AI supply chain risk guidance. If your vendor depends on another model provider, that dependency belongs in the file and the contract.

Scoring and prioritizing findings

A simple scoring model works well. Rate inherent risk from 1 to 5. Rate control strength from 1 to 5. Then flag any hard stops, even if the average score looks fine.

Common hard stops in 2026 include:

- No clear ban on training with your data or prompts

- No notice rights for subprocessor or model-provider changes

- No AI-specific incident notice or weak breach terms

- No human oversight for high-impact outputs

For example, an internal meeting-summary tool may land at medium inherent risk and pass with standard security proof. On the other hand, an autonomous support agent with external actions, prompt retention, and vague update rules should trigger remediation or rejection.
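The scoring model and hard stops above can be sketched in a few lines. The names (`Finding`, `decide`, the hard-stop keys) are hypothetical, and the approval rule is an assumption: here a vendor passes only when control strength is at least as high as inherent risk. What the sketch does take from the text is that a hard stop overrides the scores.

```python
from dataclasses import dataclass, field

# Hard stops from the list above, keyed by short hypothetical identifiers.
HARD_STOPS = {
    "no_training_ban": "No clear ban on training with your data or prompts",
    "no_change_notice": "No notice rights for subprocessor or model-provider changes",
    "weak_incident_notice": "No AI-specific incident notice or weak breach terms",
    "no_human_oversight": "No human oversight for high-impact outputs",
}


@dataclass
class Finding:
    vendor: str
    inherent_risk: int      # 1 (low) to 5 (high)
    control_strength: int   # 1 (no acceptable control) to 5 (documented, current evidence)
    hard_stops: set[str] = field(default_factory=set)


def decide(finding: Finding) -> str:
    """Return a review outcome; hard stops win regardless of scores."""
    for value in (finding.inherent_risk, finding.control_strength):
        if not 1 <= value <= 5:
            raise ValueError("scores must be between 1 and 5")
    unknown = finding.hard_stops.difference(HARD_STOPS)
    if unknown:
        raise ValueError(f"unknown hard stops: {sorted(unknown)}")
    if finding.hard_stops:
        return "remediate or reject"
    # Assumption: controls must at least match inherent risk to approve.
    if finding.control_strength >= finding.inherent_risk:
        return "approve"
    return "remediate"


summary_tool = Finding("meeting-summary tool", inherent_risk=3, control_strength=4)
support_agent = Finding(
    "autonomous support agent",
    inherent_risk=5,
    control_strength=3,
    hard_stops={"no_training_ban", "no_change_notice"},
)

print(decide(summary_tool))   # approve
print(decide(support_agent))  # remediate or reject
```

Checking hard stops before comparing scores keeps a strong average from hiding a single unacceptable gap.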

Make the template part of intake, not an afterthought

A strong AI vendor risk assessment doesn’t reward polished answers. It rewards evidence, version control, and contract terms that still work after the pilot ends.

If the vendor can’t document the control today, score the gap now and decide with eyes open. Then turn this template into your standard intake form before the next AI purchase lands on your desk.